When sort output is redirected to a file, as in grep -i "retired" ~/roster.txt | sort > ~/retired-roster.txt, the sorted output is written to the ~/retired-roster.txt file.

The uniq command takes input and removes repeated lines. Because uniq only removes identical adjacent lines, it is often used in conjunction with sort to remove non-adjacent duplicate lines.

The examples in this section use the following text file as input:

Joni Governor

Reorder Lists with sort

$ sort names-list.txt

Sort reorders the list alphabetically and writes the sorted list to standard output. Capital letters are ordered after lower case letters in many locales; the exact order depends on the collation locale (LC_COLLATE) in effect.

You can reverse the order of sort output with the -r option, as follows:

$ sort -r names-list.txt
Aaron Smith

Scramble List Order with sort

Sort can scramble the order of lines using the -R option:

$ sort -R names-list.txt

The pseudo-random order is determined by a cryptographic hash of the contents of each line, which produces a fast shuffle. Identical lines are always printed adjacent to each other. If you prefer, sort can scramble a list using the system's random number generator /dev/random or the pseudo-random number generator /dev/urandom:

$ sort -R names-list.txt --random-source=/dev/random
$ sort -R names-list.txt --random-source=/dev/urandom
Aaron Smith
Gil Watson

Ignore Case when Reordering with sort

The -f option forces sort to ignore the case of letters when ordering lines. By default, sort is not stable: lines judged to be identical may be printed out of order with regard to their original positions. Consider the output of the following commands:

$ cat names-list.txt
$ sort -fs names-list.txt
Thompson Geller

The -s option in the second command stabilizes the sort, ensuring that identical lines are output in the same order in which they occurred in the input.

To remove duplicate adjacent lines in a file, send the output of sort to the uniq command, as in the following example (using the example file above):

$ sort names-list.txt | uniq

The -u option for sort achieves the same result:

$ sort -u names-list.txt
Thompson Geller

Ignore Case Differences when Removing Duplicate Lines with uniq

Sort and uniq can both ignore case differences when dropping duplicate adjacent lines. The stability of the sorting method used can affect the final output:

$ sort -fs names-list.txt | uniq -i
Thompson Geller

Count the Number of Duplicate Lines with uniq

Thompson Geller
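The deduplication and counting behavior described above can be tried with a small sketch. The list contents below are hypothetical (the article's names-list.txt is not reproduced here), and LC_ALL=C is an assumption added only to make the ordering predictable. The -c option of uniq prefixes each output line with the number of times it occurred.

```shell
# Hypothetical stand-in for names-list.txt; the real file is not shown.
list=$(mktemp)
printf '%s\n' 'Gil Watson' 'aaron smith' 'Thompson Geller' 'Aaron Smith' 'Gil Watson' > "$list"

# uniq alone misses the repeated "Gil Watson": the two copies are not adjacent.
unsorted_uniq=$(uniq "$list" | wc -l | tr -d ' ')

# Sorting first makes duplicates adjacent; sort -u does both steps at once.
deduped=$(LC_ALL=C sort "$list" | uniq)
deduped_u=$(LC_ALL=C sort -u "$list")

# Fold case in both tools so "aaron smith" and "Aaron Smith" collapse together.
folded=$(LC_ALL=C sort -f "$list" | uniq -i | wc -l | tr -d ' ')

# Count occurrences of each line; awk only trims the padding uniq -c adds
# (this assumes every line has exactly two words, as in the sample data).
counted=$(LC_ALL=C sort "$list" | uniq -c | awk '{print $1, $2, $3}')

printf 'lines after uniq alone: %s\n' "$unsorted_uniq"
printf 'lines after sort -f | uniq -i: %s\n' "$folded"
printf '%s\n' "$counted"
rm -f "$list"
```

With this sample data, uniq alone leaves all five lines in place, while the case-folded pipeline leaves three.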

In the default configuration, sort prints its output on the terminal. To write this content to a file, redirect the output as in the following example:

grep -i "retired" ~/roster.txt | sort > ~/retired-roster.txt
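A minimal sketch of the redirect, run against a throwaway directory so no real files are touched. The roster lines are invented for illustration, and LC_ALL=C is an assumption added to keep the sort order predictable across systems.

```shell
# Hypothetical roster; the real ~/roster.txt is not shown in the article.
workdir=$(mktemp -d)
printf '%s\n' 'Watson, Gil - Retired' 'Smith, Aaron - active' 'Geller, Thompson - RETIRED' > "$workdir/roster.txt"

# Filter case-insensitively, sort, and redirect the result into a file.
# Nothing is printed by this pipeline because stdout goes to the file.
grep -i "retired" "$workdir/roster.txt" | LC_ALL=C sort > "$workdir/retired-roster.txt"

# Read the file back to confirm that it holds the sorted matches.
retired=$(cat "$workdir/retired-roster.txt")
printf '%s\n' "$retired"
rm -rf "$workdir"
```

Note that > overwrites the target file on each run; >> would append instead.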

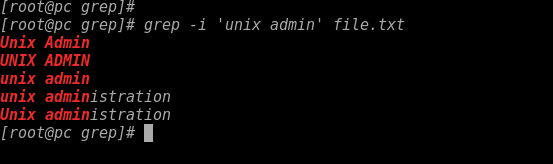

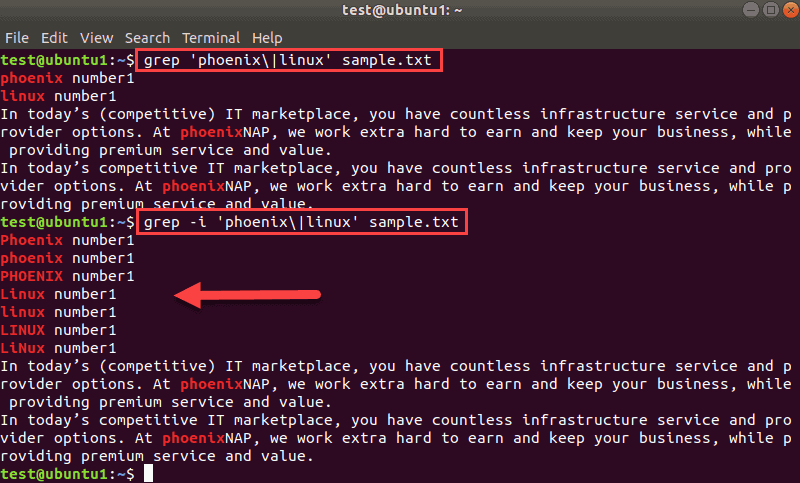

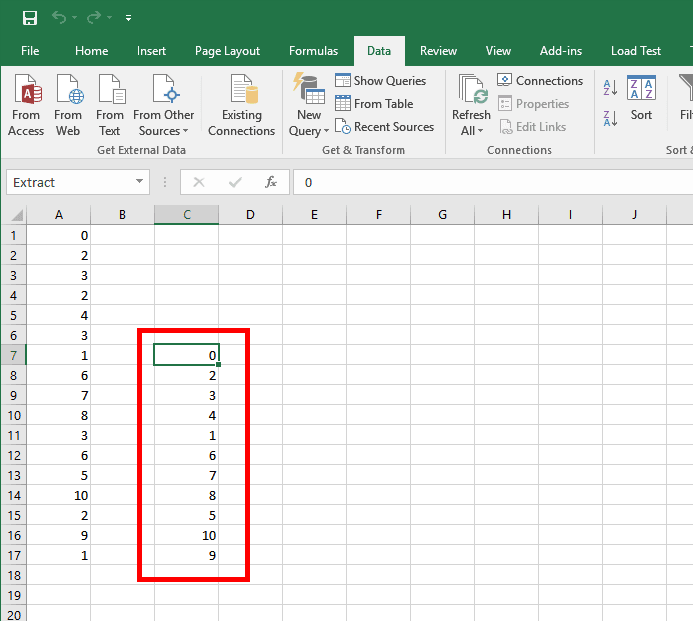

I want to filter all lines from a file that contain mySearchString and after that group them together and count them.

Example: find all lines that contain 9791

AB-9791_Foo

Using $ grep "9791" myFile.txt gives this result

AB-9791_Foo

This result should be grouped and counted (like SQL Group by Count) like this

AB-9791_Foo 2

What tool is useful (grep, awk, sed, or other) to achieve the second part, to group and count? This answer uses perl but perl is not installed on our machines.

Update with test records

In my test file Test_2.txt these lines are written

AB-9791_Foo

I copied and pasted each AB-9791_Foo line so they should be identical. Running $ grep '9791' Test_grep_uniq_sort.txt | uniq -c gave this result

1 AB-9791_Foo
1 DE-9791_Bar // expected: 4 actual: 1, 2, 1
3 AB-9791_Foo // expected: 4 actual: 1, 3

Running $ sort Test_2.txt > Test_2_sort_0.txt and then using grep | uniq on Test_2_sort_0.txt did almost return the expected output.

$ grep '9791' Test_2_sort_0.

The Linux utilities sort and uniq are useful for ordering and manipulating data in text files and as part of shell scripting. The sort command takes a list of items and sorts them alphabetically and numerically. The uniq command takes a list of items and removes adjacent duplicate lines. Though narrow in their focus, both of these tools are useful in a number of different command line operations.

The sort command accepts input from a text file or from the standard output of another command, sorts the input by line, and outputs the result. Sorted text is sent to standard output and printed on the terminal unless redirected. sort commands take the following format:

sort ~/roster.txt

Sort also accepts input from other commands, as in the following example:

grep -i "retired" ~/roster.txt | sort

This uses grep to filter the ~/roster.txt file for the string retired, regardless of case. These results are sent to sort, which reorders this output alphabetically.
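For the group-and-count question at the start of this section, one approach that needs only grep, sort, uniq, and awk is sketched below. The sample records are hypothetical (the original test file is not reproduced), and LC_ALL=C is an assumption added for a predictable order. The key point is that uniq -c only merges adjacent lines, so the grep output has to pass through sort first.

```shell
# Hypothetical sample data; the original test file is not reproduced here.
tmp=$(mktemp)
printf '%s\n' 'AB-9791_Foo' 'DE-9791_Bar' 'AB-9791_Foo' 'XY-1234_Baz' 'DE-9791_Bar' 'AB-9791_Foo' > "$tmp"

# Filter, sort so that identical lines become adjacent, then count each group.
# awk swaps the columns to print "line count", similar to SQL GROUP BY with COUNT
# (this assumes the matched lines contain no whitespace).
counts=$(grep '9791' "$tmp" | LC_ALL=C sort | uniq -c | awk '{print $2, $1}')
printf '%s\n' "$counts"
rm -f "$tmp"
```

If counts still come out split after sorting, the lines probably differ in invisible characters such as trailing spaces or carriage returns; that is a guess about the reported behavior, not something the test records confirm.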
